Overview

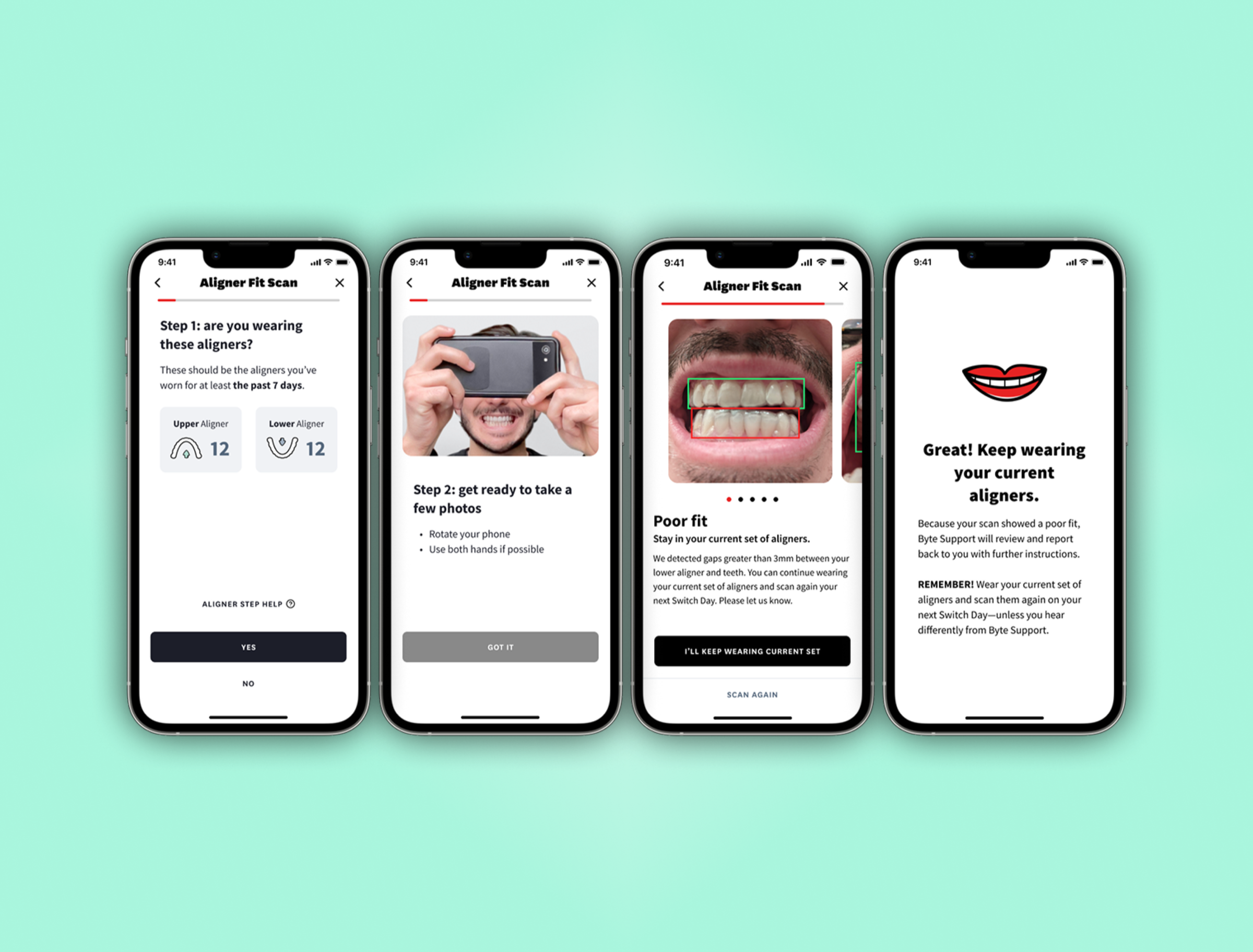

Byte's standard clear aligner prescription is 7 days per tray. After a week, the app reminds users to switch to the next tray, incrementally shifting teeth toward a straighter smile. But teeth rarely move exactly on schedule.

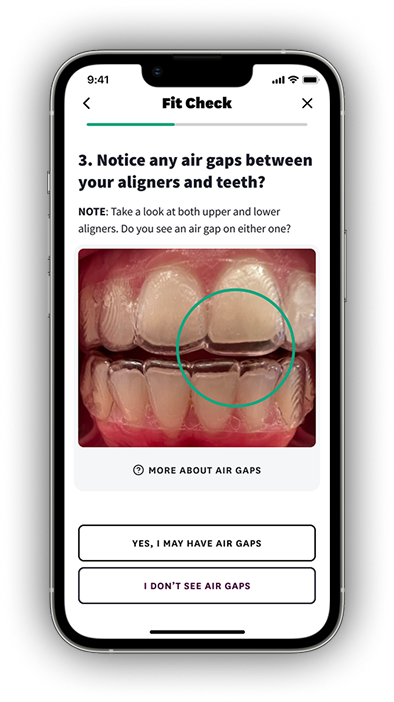

Users were following the plan, but we had no process to verify whether their aligners fit well enough to switch, and users didn't always trust their own judgment. Yet switching prematurely can lead to tooth or gum injury, damaged aligners, and delayed treatment.

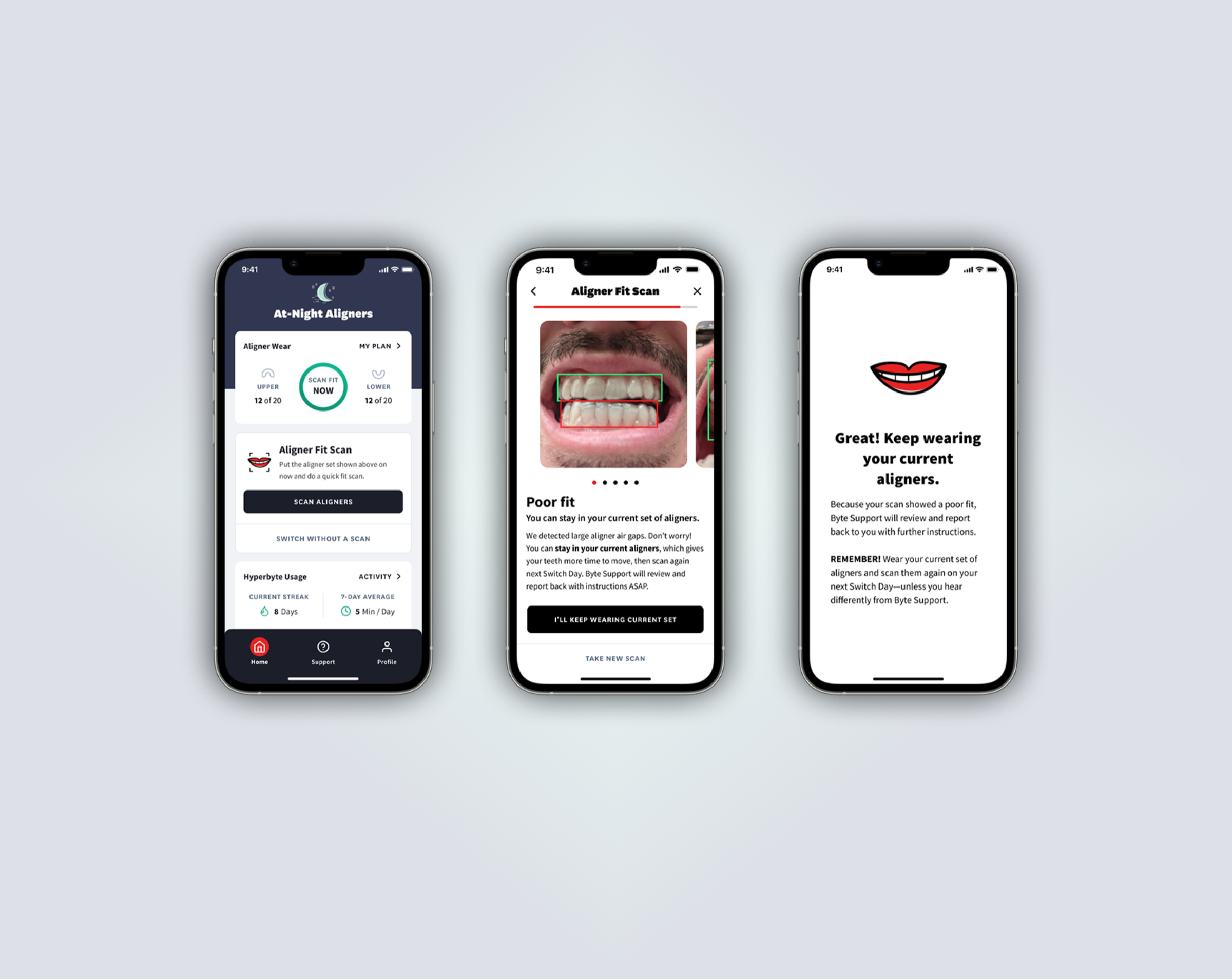

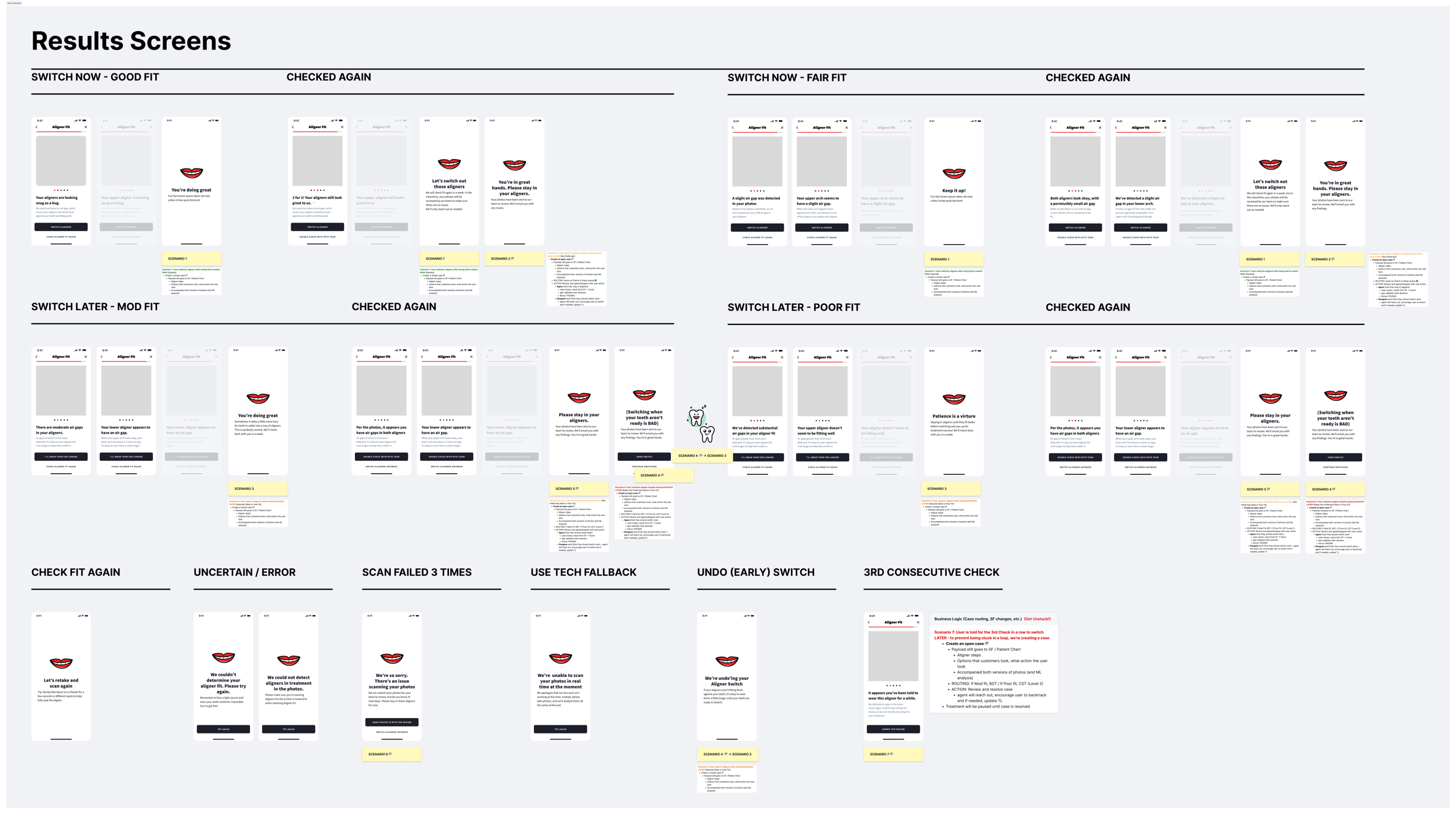

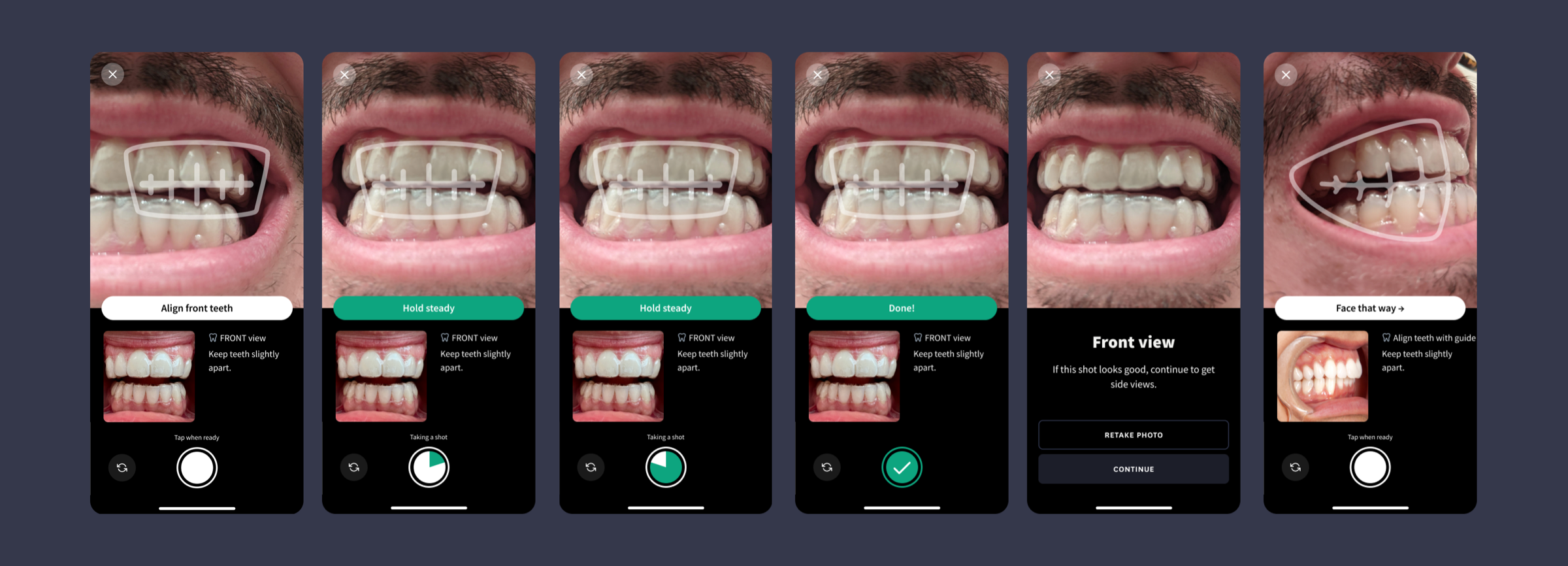

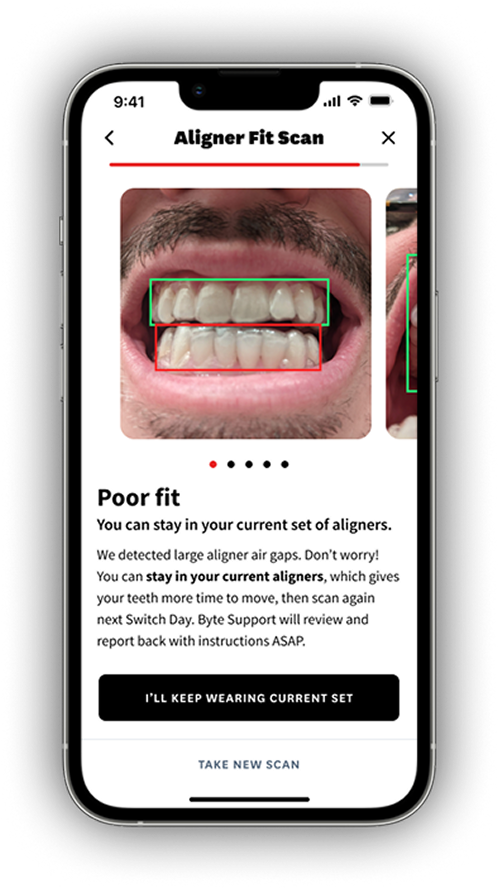

What if we could green-light well-fitting aligners once their prescribed wear time was up, and escalate poor-fitting cases to our clinical team?

I led the product strategy for an ML computer vision feature that would deliver instant fit assessments, giving users confidence as they progressed through treatment and improving their outcomes.