Overview

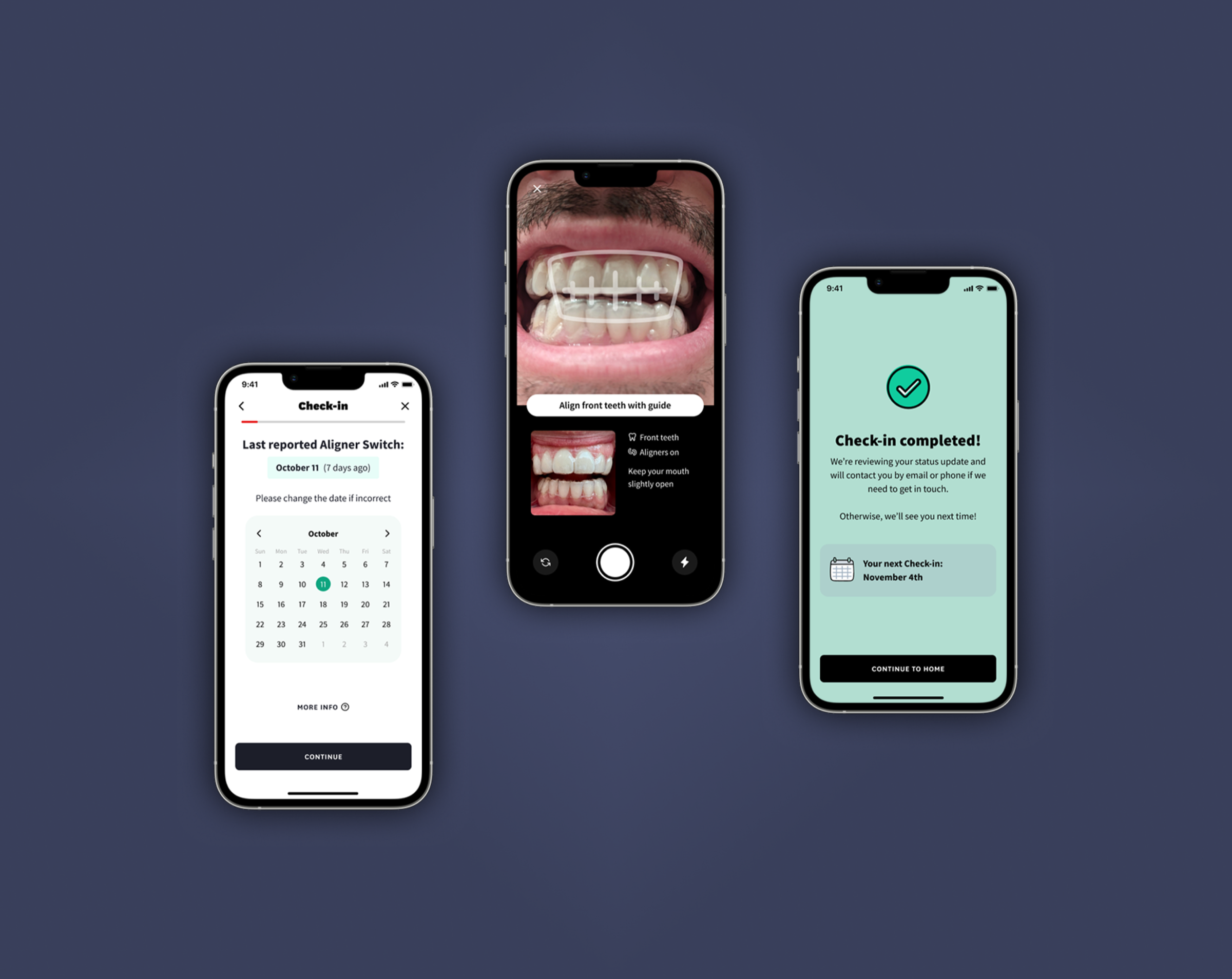

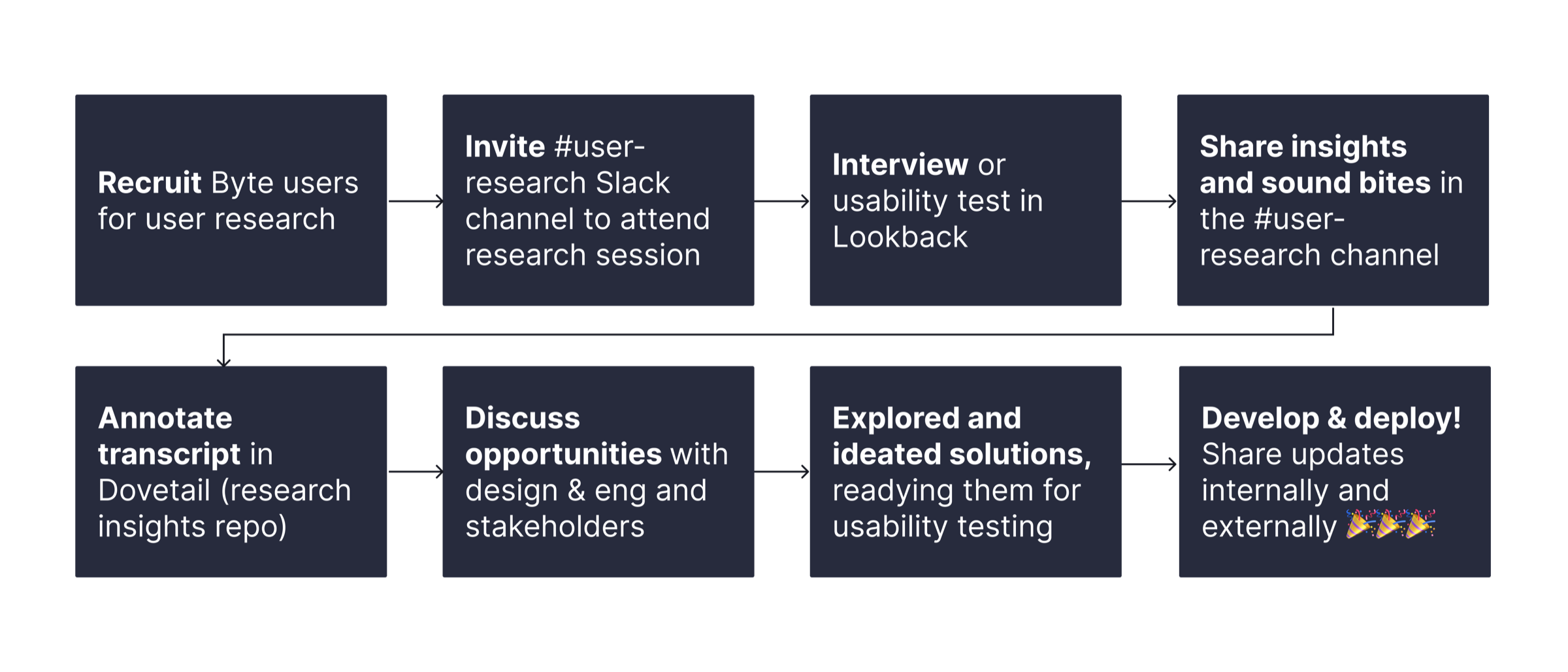

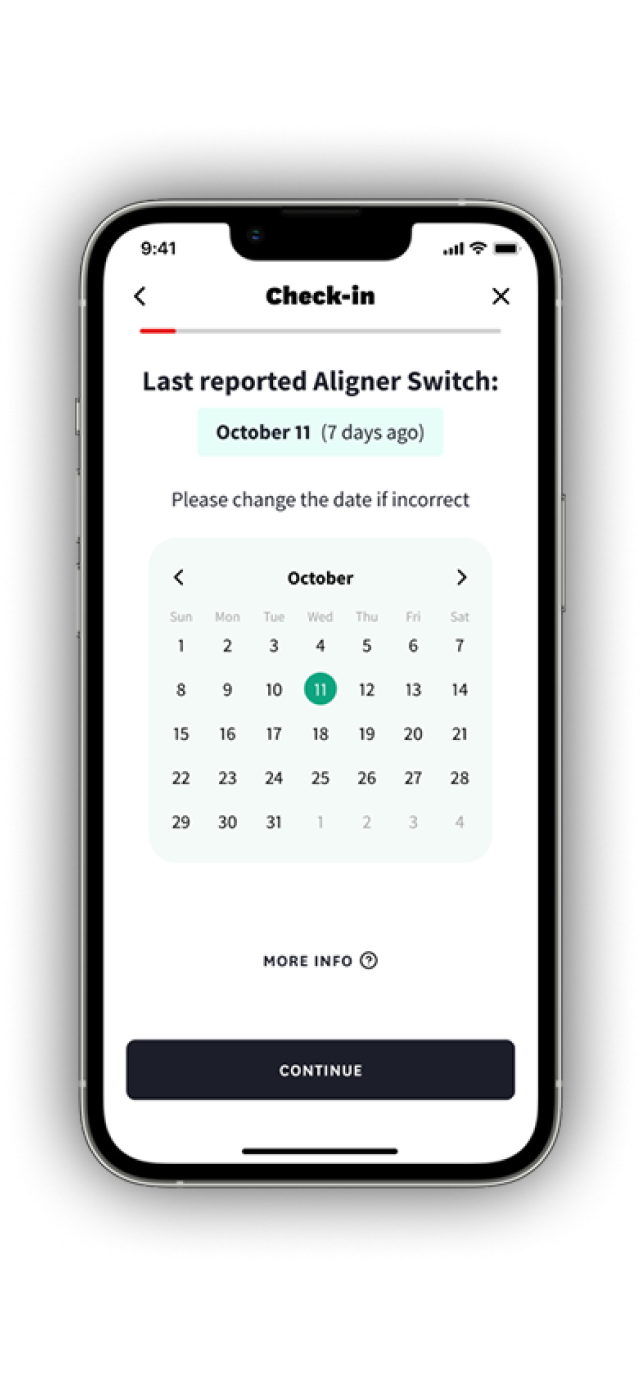

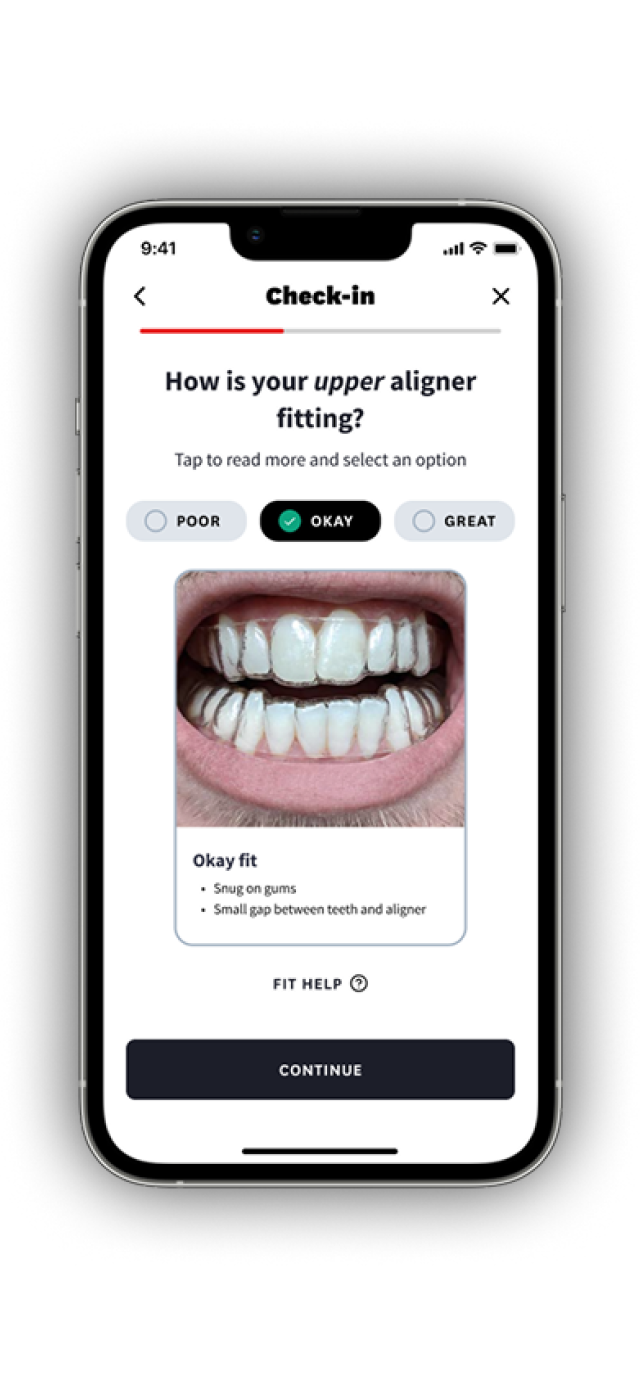

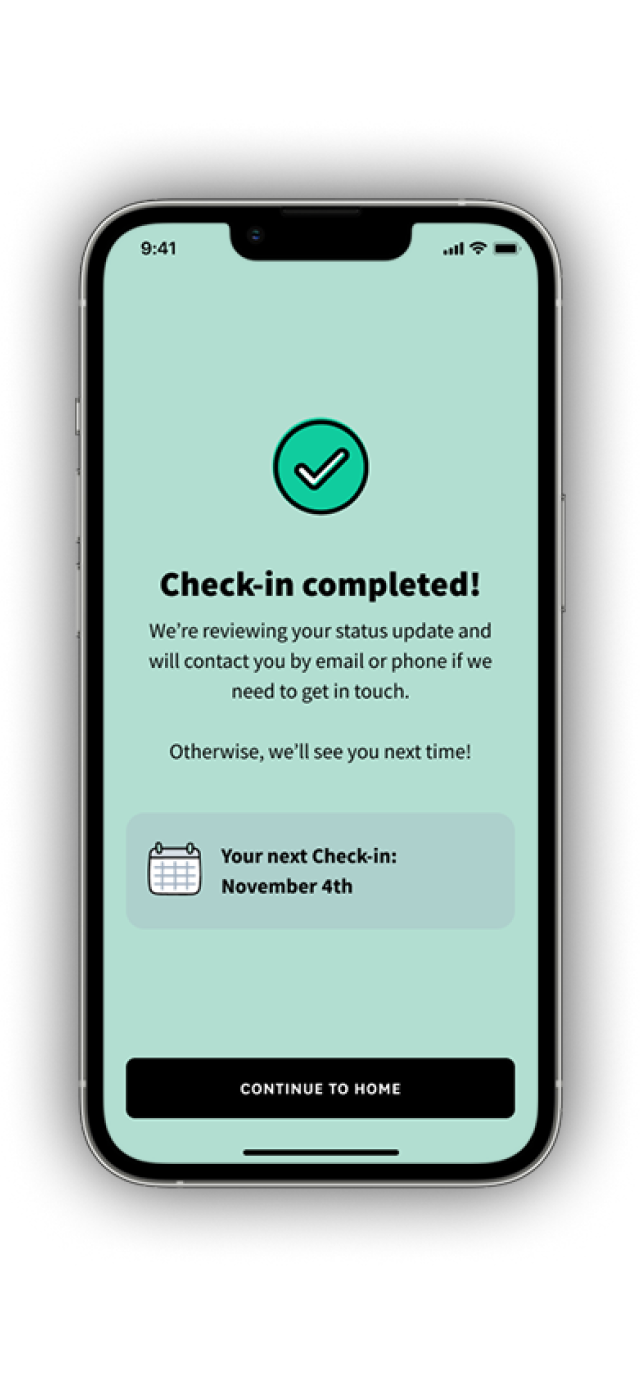

Every 25 days, Byte users were prompted to complete a Check-in: a series of questions and photos submitted through the app so our clinical team could monitor treatment progress remotely. It was our primary touchpoint for understanding how treatment was going.

And it mattered. We found that users who completed their Check-ins regularly were ~80% more likely to finish treatment on time. That correlation made Check-in one of the strongest levers we had for treatment outcomes, which in turn fed directly into end-of-treatment NPS.

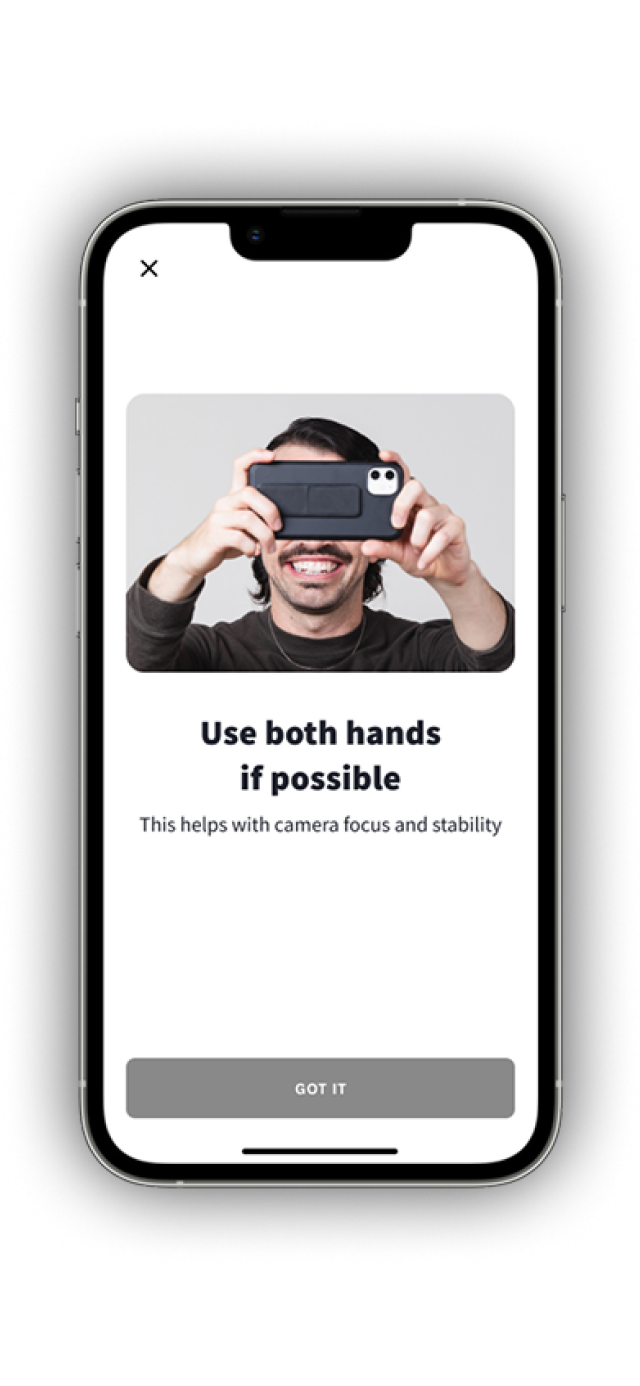

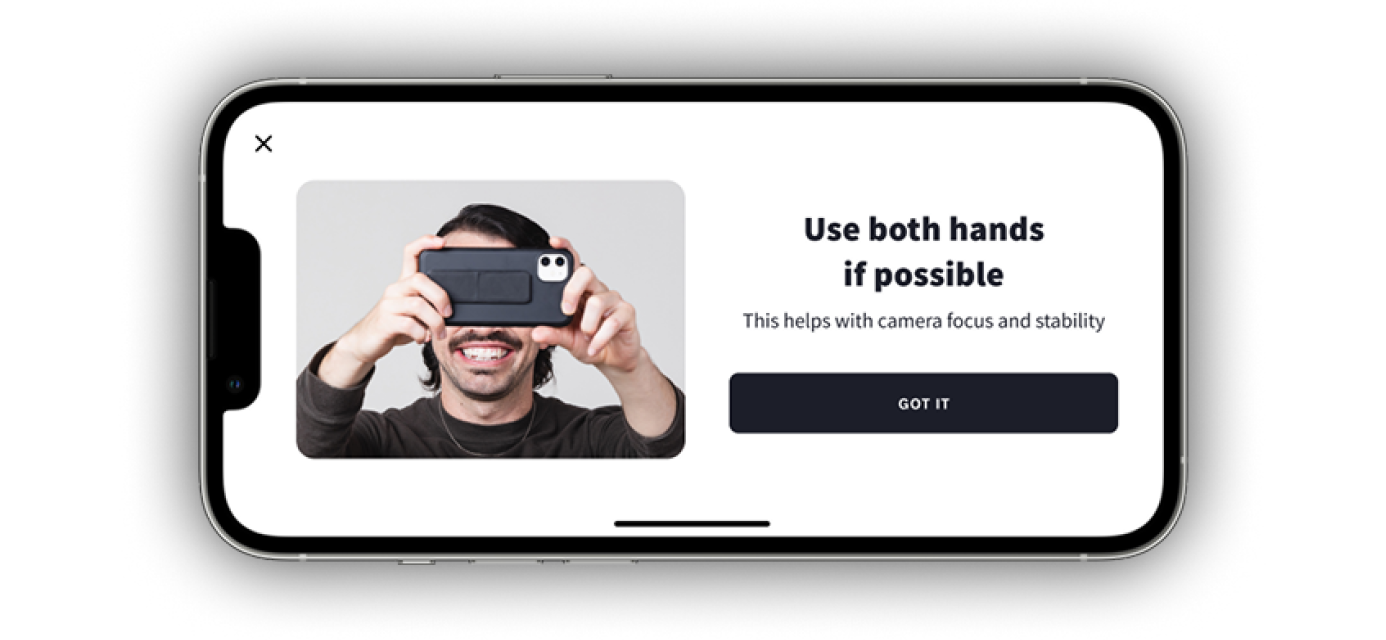

But the Check-in experience itself had friction. Users were answering questions the app already knew the answer to, struggling to describe asymmetric fit with a single dropdown, and dropping off when the camera flow asked them to photograph difficult angles. You try taking a side picture of your teeth with your phone 5 inches away! It's tough!

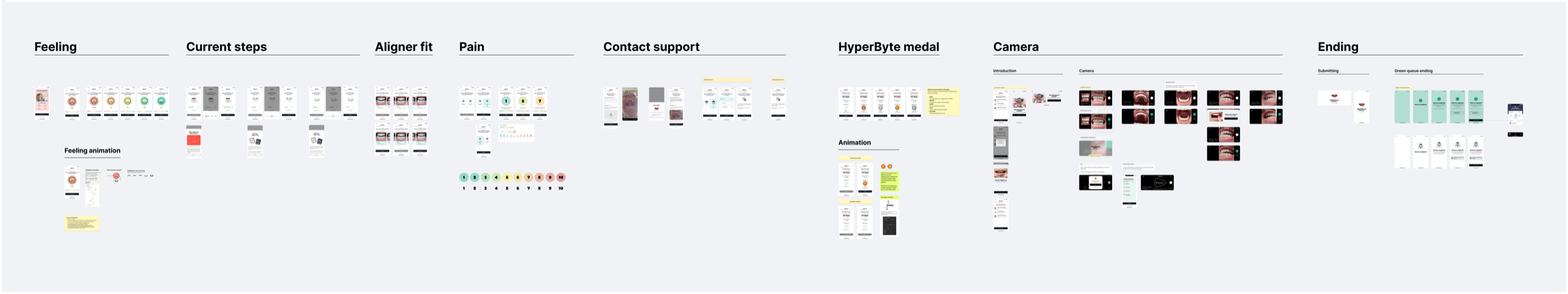

Through Continuous Discovery, we kept hearing about friction points that were small individually but compounded into a frustrating experience. I led a series of iterative improvements to the Check-in, shipping each change independently once we had validated it with users.